Here, we notice the webcam on the right side and the drawing canvas on the left side. Now, if we run the project in our browser, we’ll get the result as displayed in the demo below: For that, we’re going to use conditional rendering, as directed in the code snippet below: Lastly, we need to add the emoji image to the display to signify a hand pose detection result. The overall coding implementation is provided in the code snippet below: Add Emoji Display to the Screen We apply the gesture mapping as well as the confidence index to detect the accurate gesture and set the emoji. We’re going to make use of the GestureEstimator method from the fingerpose package in order to detect hand gestures. Next, we need to update our detect function with a gesture detecting function.

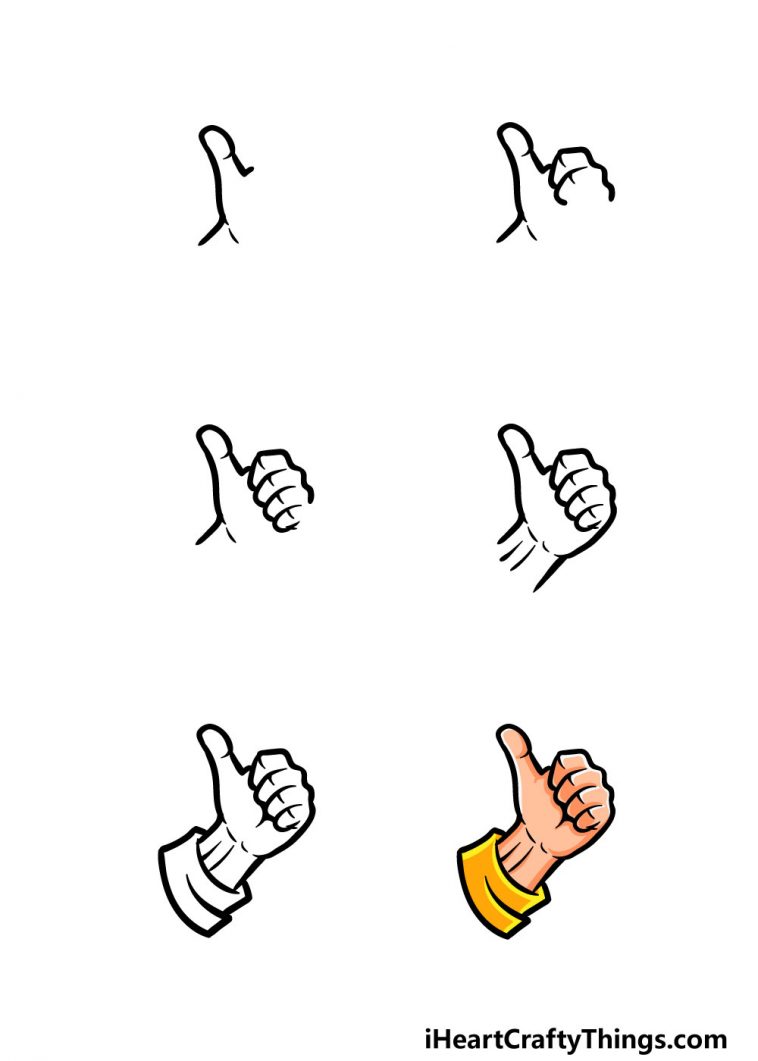

Then, we need to import the thumbs-up emoji image and the fingerpose library as fp as shown in the code snippet below: Update the detect function Adding Statesįirst, we’re going to define a state using the useState hook, which will enable us to handle the thumbs up image status as directed in the code snippet below: Import emoji image and fingerpose library For that, we’re going to make use of the fingerpose library. Now, it’s time to detect the thumbs up hand gesture. The coding implementation is provided in the code snippet below: Detecting thumbs-up This enables the detect function to run every 10 milliseconds. Next, we need to call this detect function inside the runHandpose method under the setInterval method. Then, we start estimating the hand pose using the estimateHands method provided by the hand module that takes video as a parameter, as shown in the code snippet below: Then, we need to set the canvas width and height based on the dimensions of the video: We then get and set the video properties using webcamRef that we defined earlier: First, we detect the webcam and grab the video properties to handle the video adjustments, as directed in the code snippets below: Here, we’re going to create a function called detect, which will handle the hand pose detection. In order to load the hand pose model upon starting the app, we’re going to call it inside the useEffect hook, as shown in the code snippet below: Detect Hand Pose The overall code for this function is provided in the code snippet below: In this step, we’re going to create a function called runHandpose, which initializes the hand pose model using the load method from the handpose module.

The style for both the Webcam and canvas components is provided in the code snippet below: Loading the Hand Pose model The canvas component, with its prop configurations, are provided below: The canvas component enables us to draw anything that we want to display in the webcam feed. Now, we need to add the canvas component just below the Webcam component. The coding implementation is provided in the code snippet below: Using this, we can stream the webcam feed in the canvas, also passing the refs as prop properties. Next, we need to initialize the Webcam component in our render method. First, we need to create reference variables using the useRef hook, as shown in the code snippet below: For that, we’re going to make use of the Webcam component that we installed and imported earlier. Next, we’re going to setup our webcam and canvas to view the webcam stream in the web display. Next, we need to import all the installed dependencies into our App.js file, as directed in the code snippet below: Setup webcam and canvas react-webcam: This library component enables access to your machine’s webcam in the React project.This package delivers the hand pose TensorFlow model.The core TensorFlow package based on JavaScript.We can use either npm or yarn to install the dependencies by running the following commands in our project terminal: The dependencies we need to install in our project are the posenet model, tfjs TensorFlow, and the react-webcam. Let’s get started! Setting up dependenciesįirst, we need to install the necessary dependencies in our project. Detecting hand poses using a pre-trained hand pose model.Creating a canvas to stream video from a webcam.Like in the previous tutorial, we are going to make use of a webcam for gesture detection and canvas for drawing or displaying the result of the detection. We are going to detect the hand poses and gestures using the TensorFlow.js library. 
In this tutorial, we’re going to focus our pose algorithm on a smaller area-human hands. Here, we’re going to detect hand gestures using the library.

Our previous tutorial used this library for real-time human pose estimation. In this tutorial, we’ll continue learning the various use-cases of the TensorFlow.js library.
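To make the detect step described in this tutorial more concrete, here is a minimal sketch written as plain functions so it can run outside React. The helper names `getReadyVideo`, `syncSizes`, and `startDetectLoop` are hypothetical and only for illustration; `webcamRef` and `canvasRef` stand in for the useRef objects, and `net` stands in for the loaded handpose model, which is assumed to expose `estimateHands(video)`.

```javascript
// Returns the <video> element once the stream has data, otherwise null.
function getReadyVideo(webcamRef) {
  const webcam = webcamRef.current;
  // react-webcam exposes the underlying <video> element as `video`;
  // readyState 4 (HAVE_ENOUGH_DATA) means frames are available.
  if (!webcam || !webcam.video || webcam.video.readyState !== 4) {
    return null;
  }
  return webcam.video;
}

// Copies the video's intrinsic size onto the video element and the canvas.
function syncSizes(video, canvasRef) {
  const { videoWidth, videoHeight } = video;
  video.width = videoWidth;
  video.height = videoHeight;
  canvasRef.current.width = videoWidth;
  canvasRef.current.height = videoHeight;
  return { width: videoWidth, height: videoHeight };
}

// One detection pass: resolves to the model's predictions,
// or to an empty array if the webcam is not ready yet.
async function detect(net, webcamRef, canvasRef) {
  const video = getReadyVideo(webcamRef);
  if (video === null) return [];
  syncSizes(video, canvasRef);
  return net.estimateHands(video);
}

// Runs detect every `intervalMs` milliseconds (the tutorial uses 10)
// and returns the timer id so the caller can clearInterval it later.
function startDetectLoop(net, webcamRef, canvasRef, intervalMs = 10) {
  return setInterval(() => {
    detect(net, webcamRef, canvasRef).catch(console.error);
  }, intervalMs);
}
```

In the app, `startDetectLoop` would be called from runHandpose once the model has loaded. Returning the timer id is a design choice of this sketch, so that the interval can be cleared when the component unmounts.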

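The gesture-selection step (take the estimated gestures, find the one with the highest confidence, and use its name to set the emoji) can be sketched in isolation as below. This is an illustration under assumptions, not the tutorial's original snippet: `pickBestGesture` is a hypothetical helper, and each prediction is assumed to look like `{ name, confidence }` or `{ name, score }`, since the field name differs between fingerpose versions.

```javascript
// Returns the name of the highest-rated gesture, or null if none was found.
// Assumed input: an array of objects shaped like { name, confidence } or
// { name, score } (the rating field depends on the fingerpose version).
function pickBestGesture(gestures) {
  if (!Array.isArray(gestures) || gestures.length === 0) {
    return null;
  }
  const rating = (g) => g.score ?? g.confidence ?? -Infinity;
  let best = gestures[0];
  for (const g of gestures) {
    if (rating(g) > rating(best)) {
      best = g;
    }
  }
  return best.name;
}

// Example with made-up values.
const sample = [
  { name: "victory", confidence: 7.5 },
  { name: "thumbs_up", confidence: 9.2 },
];
console.log(pickBestGesture(sample)); // prints "thumbs_up"
```

In the app, the array would come from the `gestures` field of the object returned by the GestureEstimator's `estimate` call, and the returned name would be passed to the state setter that controls which emoji is rendered.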